AI... in Space!

An exercise in finding out how many ways physics says no to orbital datacenters.

Published: March 10, 2026

Tweets to Reality

Elon Musk has been talking a lot about space-based AI datacenters as a way to power our chatbots of the future. In 280 characters it sounds almost annoyingly perfect: constant solar power, unlimited room, no neighbors to complain, no permits, and a solution only SpaceX can provide.

It's the kind of idea that makes Twitter futurists feel clever. Which is usually a sign that someone needs to do the math.

As someone with a mechanical engineering degree, some professional exposure to both space and AI, and a very strong reflex against slide-ware and buzzwords, I decided to build a model and see whether this makes even remote physical or economic sense.

I tried to be fair. I started from first principles, made generous assumptions where I could, and let the physics make the argument for me.

The result is not subtle: orbital datacenters are not a clever solution to AI infrastructure. They are what happens when you take an already difficult system and move it to the least forgiving environment possible.

I started with this notebook to verify and model all the calculations. Sources for prices, as well as other model considerations, are given there. I also built an interactive explorer where you can tune the key assumptions yourself and see how the numbers shift.

GPU Specs

To keep this model grounded, I needed a reference system. I used NVIDIA's GB200 NVL72 rack: 72 Blackwell B200 GPUs, about 120 kW of compute, and about 3,000 kg on Earth. This is the latest and greatest that you can get today.

| GPU Rack | GPUs | Compute Power | Weight (On Earth) | Weight (Space Mode) |
|---|---|---|---|---|
| NVIDIA GB200 NVL72 | 72 | 120 kW | 3,000 kg | 750 kg |

For the orbital version, I stripped out the terrestrial cooling hardware, enclosure, and as much structural dead weight as I could justify, taking that down to roughly 750 kg in what I'll call Space Mode™.

The goal is simple: take the same kind of chips you'd use in a terrestrial inference* datacenter and ask what it would take to build the orbital equivalent.

*Inference in AI means serving already trained models. This will have lower power demands per chip and is the part of AI that will need the most scaling. This is a concession made by Dwarkesh's steel-manning of orbital datacenters.

From here, everything reduces to two questions: how do you power the GPUs, and how do you get rid of the heat?

On Earth, power is the hard part. Solar has clouds and nighttime, gas turbines come with permitting fights, and grid interconnection is slow and painful. Cooling, by comparison, is relatively manageable because air and water easily move that heat elsewhere.

In space, that trade flips. Power is easier to find. Cooling is where the fantasy starts to fall apart.

Is Space Cold?

This is where the phrase "space is cold" stops being useful and starts being misleading.

Space is not cold in the way a server room is cold. It is a vacuum. And vacuum is excellent if your goal is to keep coffee hot in a thermos and prevent heat transfer, which is unfortunately the opposite of what a GPU rack wants.

On Earth, datacenters dump waste heat into moving air or water and let convection do the hard work. In orbit, convection is gone. Every watt your GPUs consume eventually becomes heat, and the only way to get rid of it is radiation. The best way to radiate heat… is with a radiator. We can model the size (and weight) of the radiator needed to keep our GPUs from melting themselves.

Heat radiation has nothing to do with nuclear radiation. It's the energy an object gives off as light (usually not visible). On Earth this is a small effect next to convection, but in space it's our only hope.

Here is our first equation. Radiation is given as:

\[q_{emit} = \epsilon\,\sigma\,k_{eff}\,(T_{rad}^4 - T_{sink}^4)\]

We're using \(q_{emit}\) as the power we emit per unit area of radiator, which tells us how big a radiator we need to attach to our orbiting GPU rack. \(T_{rad}\) (radiator temperature) is the variable we can adjust, and \(T_{sink}\) (the effective temperature of space) is constant. The rest are constants and efficiency numbers: \(\epsilon\) is the emissivity of the radiator surface, \(\sigma\) is the Stefan-Boltzmann constant, and \(k_{eff}\) is an overall efficiency factor.

A realistic radiator temperature is the GPU temperature minus the drop caused by thermal resistance between the chip and the radiator material. Because emitted power scales with \(T_{rad}^4\), high temperatures are key to making this radiator work.

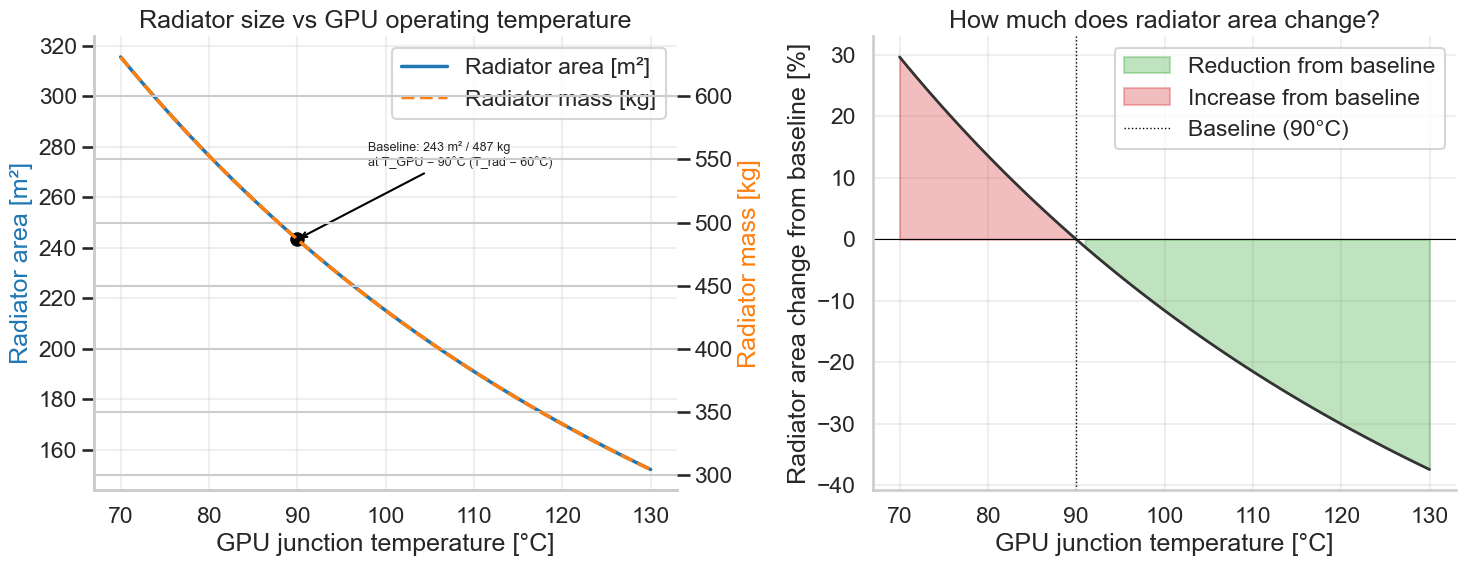

So the hotter the GPU, the more efficient our radiator. NVIDIA specifies a maximum temperature of \(83^\circ\text{C}\) for its B200s. We can hope that with some modifications, or a future NVIDIA chip, we'll be able to operate a little hotter.

In my model I chose \(90^\circ\text{C}\) for the GPU operating temperature and a \(30^\circ\text{C}\) temperature drop to the radiator to account for internal resistance. You can see my baseline selection on the left chart, which gives a \(243.4 \, m^2\) area and a \(486.7 \, kg\) mass using an optimistic aluminum honeycomb radiator.

The right chart shows that if we vary the GPU temperature, we can shrink the radiator area (and thus its cost) quite a bit.
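As a rough sanity check on the radiator equation, here is a minimal Python sketch. The 90 °C GPU temperature and 30 °C drop come from the baseline above, the heat load is the roughly 0.114 MW per satellite used later in this post, and the 2 kg/m² areal density is what the baseline mass and area imply. The emissivity (0.9), \(k_{eff}\) (0.75), and sink temperature (4 K) are my own illustrative assumptions, picked because they land near the baseline. The notebook's actual values may differ.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W/(m^2 K^4)


def radiator_flux(t_rad_k, t_sink_k=4.0, emissivity=0.9, k_eff=0.75):
    """Net heat rejected per square metre of radiator, in W/m^2.

    emissivity, k_eff and t_sink_k are illustrative assumptions,
    not values taken from the notebook.
    """
    return emissivity * SIGMA * k_eff * (t_rad_k**4 - t_sink_k**4)


def radiator_size(heat_w, gpu_temp_c, drop_c=30.0, areal_density=2.0):
    """Return (area in m^2, mass in kg) needed to reject heat_w watts.

    drop_c is the chip-to-radiator temperature drop; areal_density is
    in kg/m^2 (about 2 is implied by the baseline 486.7 kg / 243.4 m^2).
    """
    t_rad_k = gpu_temp_c - drop_c + 273.15  # radiator temperature in kelvin
    area = heat_w / radiator_flux(t_rad_k)
    return area, area * areal_density


HEAT_W = 114_000.0  # roughly 0.114 MW of waste heat per satellite

baseline_area, baseline_mass = radiator_size(HEAT_W, gpu_temp_c=90.0)
print(f"baseline: {baseline_area:.0f} m^2, {baseline_mass:.0f} kg")

# Sweep the GPU temperature to see the T^4 sensitivity.
for gpu_temp_c in (70, 80, 90, 100, 110):
    area, mass = radiator_size(HEAT_W, gpu_temp_c)
    print(f"GPU at {gpu_temp_c} C -> {area:.0f} m^2, {mass:.0f} kg")
```

Because the flux depends on the fourth power of temperature, the sweep shows the area shrinking quickly as the GPU is allowed to run hotter.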

The conclusion here is that each satellite needs about a tennis court's worth of exotic aluminum radiator launched into space alongside its GPUs, as opposed to… some fans.

Sunshine Fractions

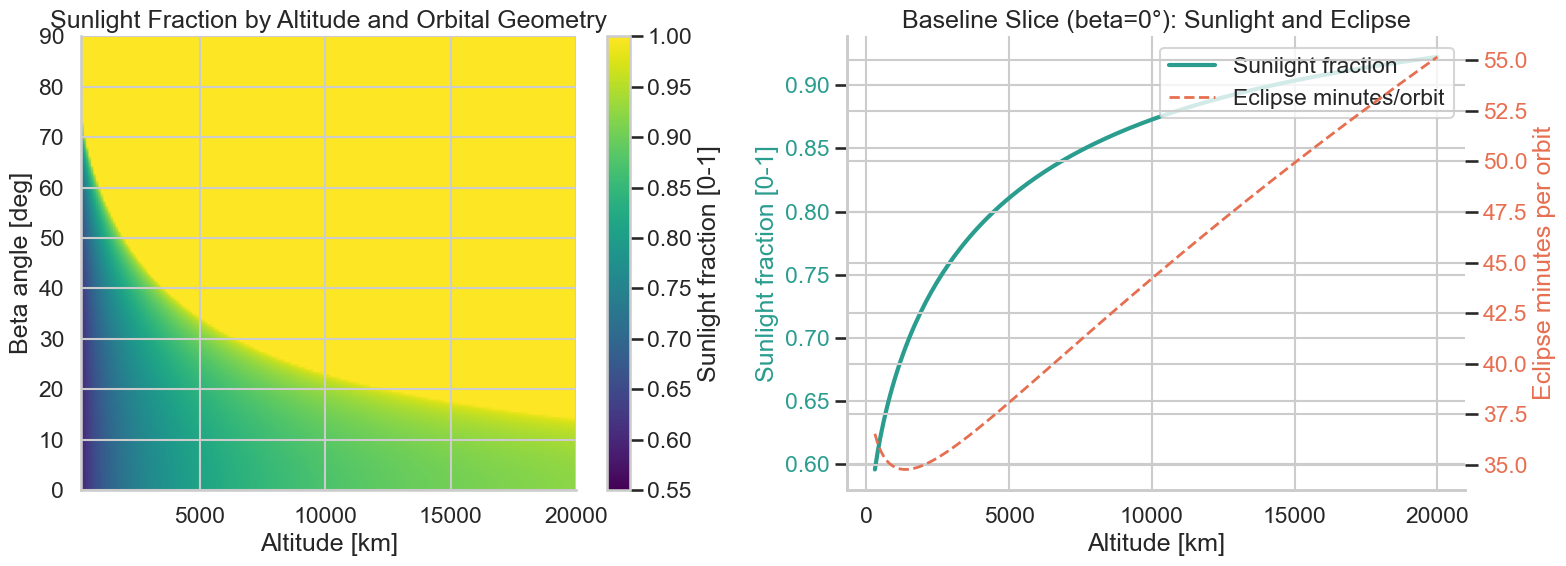

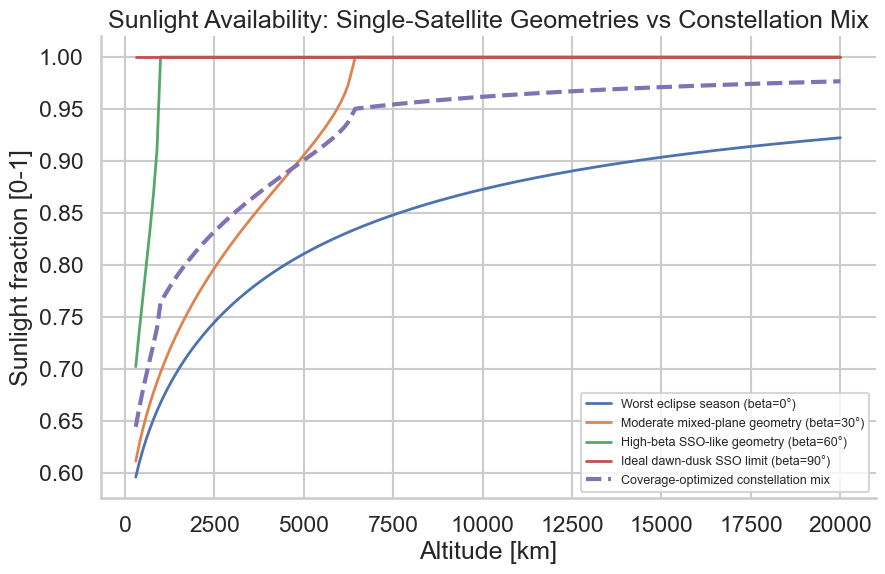

The next consideration for our satellites is how often they get sunshine (and thus power). You can absolutely make satellites that get 24/7 sunshine in polar orbit. In our model we use a mix of orbits so we don't crowd any one area, which in theory would improve communication and make placing the satellites easier. Assuming we have perfect data links between satellites and down to Earth (a big but reasonable assumption for SpaceX/Starlink), we can create a constellation with an average sunlight fraction of >95% to support continuous uptime.

As you go higher in altitude you get more sunlight too, because the Earth looks smaller from the satellite and blocks the sun less often. Going higher also costs more money, so we want to go no higher than we need to.

The left chart shows how the percentage of time in sunlight changes as we vary altitude and beta (the angle of the orbit to the sun, with 0° being around the equator and 90° being around the poles but always facing the sun). The right chart shows the fraction of time in sunlight for an equatorial satellite as we increase altitude.

In low orbit, the Earth gets in the way a lot. Satellites regularly pass through eclipse, which means no sunlight and no solar generation.

You can solve that with batteries, but batteries are just more mass, which means more launch cost. So instead of pretending batteries are free, I modeled a constellation that gets most of its uptime from orbit selection and geometry.

Using the modeling in my notebook, I chose a mixed-plane constellation with an average altitude of 7,000 km and the beta breakdown below to create a constellation with >95% sun time and even coverage.

| Beta | Constellation weight |
|---|---|
| 0° | 30% |
| 30° | 45% |
| 60° | 20% |
| 90° | 5% |
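The mix above can be sanity-checked with the standard cylindrical-shadow eclipse formula for circular orbits. This is a minimal sketch, not the notebook's model: it assumes a 7,000 km altitude (the target orbit used in the launch section), holds each group at a fixed beta angle, and ignores penumbra and the seasonal drift of beta. The function and variable names are my own.

```python
import math

R_EARTH_KM = 6371.0  # mean Earth radius


def sunlit_fraction(altitude_km, beta_deg):
    """Fraction of a circular orbit spent in sunlight.

    Uses a cylindrical Earth shadow. Above the critical beta angle
    the orbit never enters the shadow at all.
    """
    r = R_EARTH_KM + altitude_km
    beta = math.radians(abs(beta_deg))
    beta_star = math.asin(R_EARTH_KM / r)  # critical beta angle
    if beta >= beta_star:
        return 1.0
    h = altitude_km
    cos_half_angle = math.sqrt(h * h + 2.0 * R_EARTH_KM * h) / (r * math.cos(beta))
    # Clamp to guard against floating-point overshoot near beta_star.
    eclipse_fraction = math.acos(min(1.0, cos_half_angle)) / math.pi
    return 1.0 - eclipse_fraction


# Beta angle in degrees -> share of the constellation, from the table above.
BETA_MIX = {0: 0.30, 30: 0.45, 60: 0.20, 90: 0.05}
ALTITUDE_KM = 7000.0  # assumed constellation altitude

constellation_average = sum(
    share * sunlit_fraction(ALTITUDE_KM, beta) for beta, share in BETA_MIX.items()
)

for beta in BETA_MIX:
    print(f"beta {beta:>2} deg: {sunlit_fraction(ALTITUDE_KM, beta):.1%} sunlit")
print(f"weighted average: {constellation_average:.1%}")
```

Under these simplifications only the beta 0° group ever sees eclipse at this altitude, and the weighted average comes out just above 95%, in line with the figure quoted above.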

This chart helped me visualize the trade offs:

Getting There Is Half the Problem (and Most of the Cost)

This is where orbital compute stops sounding futuristic and starts sounding expensive.

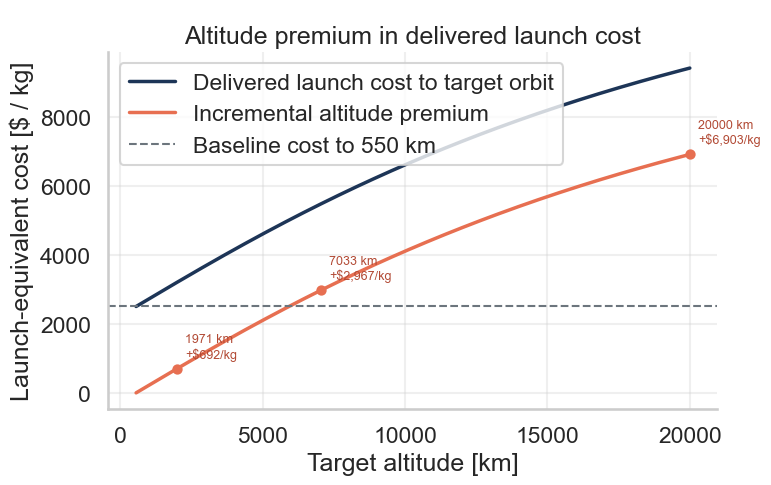

It's no mystery that it costs a lot to get things into orbit. SpaceX has done a lot to drive that cost down to the point where we're even discussing putting a datacenter in space. But the cost is not 0, and when you factor in the weight of what you're putting up there, plus the higher orbit, buying the entire state of Nevada for your datacenter starts to make sense.

First let's look at quoted costs in 2026. SpaceX will charge you $2,500 per kg to get to a 550 km orbit. From there we have to transfer to a 7,000 km orbit by burning additional propellant, a maneuver known as a Hohmann transfer. Realistically SpaceX would probably take the entire upper stage to 7,000 km, but it still makes sense to model the transfer separately. The process is the same; it just means the satellites stay on the final rocket stage while the stage itself performs the transfer.

Performing this transfer to take the satellites to a higher orbit means more fuel, which means more mass, which means higher cost. Instead of $2,500 per kg to orbit, we're looking at more like $5,500 per kg, more than double the price.

In order to get to higher orbits you have to burn energy and go faster at your initial orbit, that will be implied in the calculation below.

\[c_{\text{delivered}}(h) = c_{550} \times \frac{\text{kg launched to 550 km}}{\text{kg delivered to altitude } h}\]

Because moving to a higher orbit costs energy, we have to carry that energy, as fuel, up to the 550 km orbit to begin with. You can see how this compounds: for every 1 kg of mass delivered to our final orbit, we have to bring an extra 1.2 kg of fuel to get to 7,000 km, which means we are paying for the launch of 2.2 kg for every 1 kg that ends up where we want it.
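To see where a multiplier like 2.2 can come from, here is a minimal Python sketch of the Hohmann transfer from 550 km to 7,000 km, combined with the ideal rocket equation. The 300 s specific impulse is my own assumption, not a number from the notebook, and the ideal equation ignores tank and engine mass, so treat the result as a ballpark rather than a reproduction of the model.

```python
import math

MU_EARTH = 398_600.4418  # Earth's gravitational parameter, km^3/s^2
R_EARTH_KM = 6371.0      # mean Earth radius
G0 = 9.80665             # standard gravity, m/s^2


def hohmann_delta_v(alt1_km, alt2_km):
    """Total delta-v (km/s) for a two-burn transfer between circular orbits."""
    r1 = R_EARTH_KM + alt1_km
    r2 = R_EARTH_KM + alt2_km
    a_transfer = 0.5 * (r1 + r2)  # semi-major axis of the transfer ellipse
    v1 = math.sqrt(MU_EARTH / r1)  # circular speed at the low orbit
    v2 = math.sqrt(MU_EARTH / r2)  # circular speed at the high orbit
    v_peri = math.sqrt(MU_EARTH * (2.0 / r1 - 1.0 / a_transfer))
    v_apo = math.sqrt(MU_EARTH * (2.0 / r2 - 1.0 / a_transfer))
    return (v_peri - v1) + (v2 - v_apo)


def launched_per_delivered(delta_v_km_s, isp_s):
    """kg needed at the low orbit per kg delivered (ideal rocket equation)."""
    return math.exp(delta_v_km_s * 1000.0 / (G0 * isp_s))


def delivered_cost_per_kg(cost_550, mass_ratio):
    """c_delivered = c_550 * (kg launched to 550 km / kg delivered)."""
    return cost_550 * mass_ratio


delta_v = hohmann_delta_v(550.0, 7000.0)
ideal_ratio = launched_per_delivered(delta_v, isp_s=300.0)  # assumed Isp
ideal_cost = delivered_cost_per_kg(2500.0, ideal_ratio)
article_cost = delivered_cost_per_kg(2500.0, 2.2)  # ratio used in the text

print(f"transfer delta-v: {delta_v:.2f} km/s")
print(f"ideal mass ratio at Isp 300 s: {ideal_ratio:.2f}")
print(f"ideal delivered cost: ${ideal_cost:,.0f}/kg")
print(f"cost at a 2.2 ratio: ${article_cost:,.0f}/kg")
```

The transfer needs roughly 2 km/s of delta-v, and the ideal mass ratio lands in the same neighborhood as the 2.2 used above. Plugging 2.2 into the cost formula gives the $5,500 per kg figure.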

Scaling Laws… in Space

So how much would all of this cost at scale? All of this is possible, it just comes with costs (that I think make no sense).

For scale I've specified a datacenter with a 200 MW maximum output. 200 megawatts is incredibly big, but facilities of that size exist on Earth. The OpenAI datacenter in the UAE is 200 MW as of 2026, and the xAI Memphis datacenter is currently 150 MW.

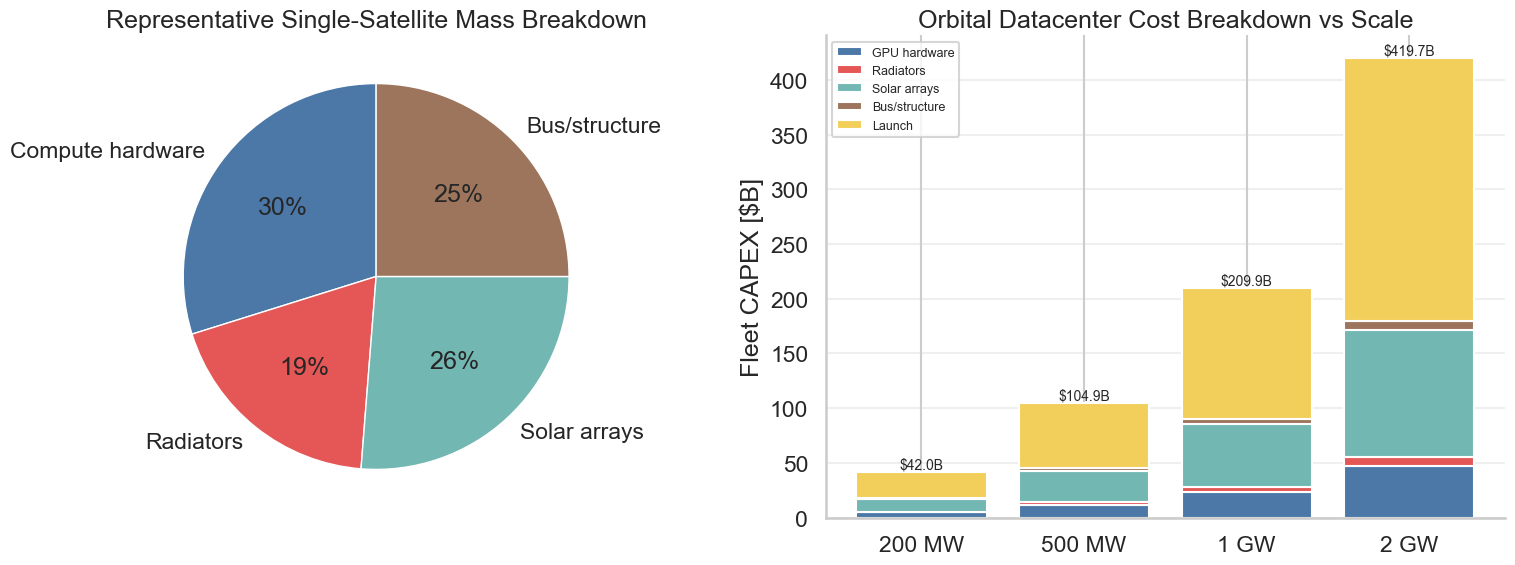

Here are the calculated numbers. With GPUs, solar arrays, radiator, and networking overhead, one satellite is 2,514 kg with 8 B200 GPUs. Going to an altitude of 7,000 km will cost $13.7M per satellite. The total cost of each satellite is $24M. To assemble a 200 MW datacenter we need 1,750 satellites (0.114 MW per satellite/8 B200s), bringing our total orbital datacenter cost to $42B. For perspective, the OpenAI "Stargate" project is budgeted at $500B to reach 10 GW of compute, so $50B per gigawatt compared to our $210B per GW.

The most useful way to think about this is the space premium: out of the $42B total, $4.72B is GPU cost. The remaining $37.25B is the premium you pay for launch, solar arrays, radiators, and all the other equipment required to keep the exact same compute alive in space.

That means the overwhelming majority of the project cost is not compute. It is self-inflicted orbital inconvenience.
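The roll-up is simple enough to check by hand. Here is a minimal Python sketch using only the rounded figures quoted above; the variable names are my own. Because the inputs are rounded, the premium comes out at about $37.28B rather than the $37.25B from the full model.

```python
TARGET_MW = 200.0           # datacenter size
N_SATELLITES = 1750         # satellites in the constellation
COST_PER_SAT_USD = 24e6     # all-in cost per satellite
LAUNCH_PER_SAT_USD = 13.7e6 # launch cost per satellite to 7,000 km
GPU_TOTAL_USD = 4.72e9      # GPU cost across the whole constellation

power_per_sat_mw = TARGET_MW / N_SATELLITES
total_usd = N_SATELLITES * COST_PER_SAT_USD
space_premium_usd = total_usd - GPU_TOTAL_USD
premium_share = space_premium_usd / total_usd
launch_share = LAUNCH_PER_SAT_USD / COST_PER_SAT_USD
usd_per_gw = total_usd / (TARGET_MW / 1000.0)

print(f"power per satellite: {power_per_sat_mw:.3f} MW")
print(f"total cost:          ${total_usd / 1e9:.1f}B")
print(f"space premium:       ${space_premium_usd / 1e9:.2f}B ({premium_share:.0%} of total)")
print(f"launch share:        {launch_share:.0%} of each satellite's cost")
print(f"cost per GW:         ${usd_per_gw / 1e9:.0f}B")
```

On these numbers launch alone is more than half the cost of every satellite, and close to nine out of every ten dollars go to something other than GPUs.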

Below is a breakdown of the mass for each satellite along with the costs of other datacenter sizes.

Using the "Space Premium" we can decide if it's worth it to do this at all. With that same budget, could you buy land, secure power, build cooling, bribe regulators and politicians, and stand up a terrestrial facility of the same size?

Easily.

Not a Serious Proposal

So my conclusion is not just that orbital datacenters are uneconomic today. It's that, under anything resembling present-day physics and present-day engineering, they are not a serious proposal.

If someone wants to advocate for them anyway, they need to be very specific about which constraint they expect to change.

Is launch cost collapsing by an order of magnitude? Are solar arrays becoming dramatically lighter? Is heat rejection in vacuum somehow getting easier? Which aspect of reality, exactly, stops mattering in the future?

If you cannot point to the specific breakthrough doing the work, then "AI datacenters in space" is not a plan. It is just the word space slapped on to a popular industry in order to sound like a futurist.

The numbers are not subtle here. You are taking one of the most power-hungry, heat-constrained industrial systems humans build and relocating it to an environment with no air, impossible maintenance, brutal launch costs, and zero tolerance for mistakes.

That is not visionary. It is a normal datacenter plus orbital mechanics.

Could some future world make this less ridiculous? Maybe. But that future would require meaningful changes in launch economics, power system mass, and possibly thermal management itself.

Until someone can say exactly what those changes are, and defend them quantitatively, orbital datacenters deserve to be treated the same way we treat most bad futurist ideas: as branding exercises wearing a lab coat.

So that you're not just taking my word for it, I built an interactive Space Datacenter Explorer where you can change launch cost, solar array power, and other assumptions yourself.

I think that's the only serious way to discuss this idea. Not with vibes, and not with hand-waving about future innovation, but by naming the variables and showing which miracles you are counting on.